Y2038 and Millennium Bug, analysis of a disaster

If you’re old enough, you’ve surely heard of the Millennium Bug. No, it wasn’t just a sensational news story: it was, or rather is, one of the most feared bugs in the history of computing.

A time bug

During the 1990s a nasty rumor started spreading across almost every part of the world: the Millennium Bug, or Y2K. The rumor was that machines would be unable to tell the new century from the old one. Panic soon followed. People said that on the night between 31 December 1999 and 1 January 2000, the world as we knew it would end. Planes would fall from the sky, credit cards would become meaningless, telephones would stop working and, last but not least, nuclear weapons would be unleashed. But why all of this? Weren’t we using timestamps? Not quite. Let me explain how things used to be done back then.

The problem of Y2K

Back then, memory was scarce, both RAM and storage, so most applications were optimized to take as little space as possible. Almost everything was built upon this holy rule: everything had to be as small as possible. Time was no exception. In that era, most applications stored the year in a fixed format: its last two digits. So 1937, for example, would be stored as 37, and the application would then add the century part (19). But what would happen in the next century? The year 2000 would be stored as 00 and read back as 1900. That’s where the Millennium Bug showed up. Fast forward to that day: thanks to heavy investments, contingency planning and speed, we managed to escape the biggest bug of the last century, and the world didn’t end. It wasn’t dodged entirely, however, and minor problems were reported.
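To make the two-digit scheme concrete, here is a minimal sketch in Python. The function names and the age calculation are hypothetical illustrations, not code from any real legacy system; the only assumption taken from the text above is that the program stores two digits and always adds the century 19.

```python
def expand_two_digit_year(yy: int, century: int = 1900) -> int:
    """Rebuild a full year from two stored digits, assuming a fixed century."""
    return century + yy


def age_in_years(birth_yy: int, current_yy: int) -> int:
    """Compute an age from two-digit years, as a legacy program might."""
    return expand_two_digit_year(current_yy) - expand_two_digit_year(birth_yy)


print(expand_two_digit_year(37))  # 1937, as intended
print(expand_two_digit_year(0))   # 1900, although the year 2000 was meant
print(age_in_years(37, 99))       # 62, correct in 1999
print(age_in_years(37, 0))        # -37, the rollover makes the age negative
```

Any calculation that compares or subtracts years goes wrong in the same way once the stored value rolls over from 99 to 00.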

Y2038: it isn’t over yet

After facing the Millennium Bug we became more cautious and forward-thinking. But they say history is cyclic. The next Millennium Bug will affect UNIX timestamps. A timestamp is a way to encode time by counting from a particular event. UNIX timestamps count the seconds elapsed since the Unix Epoch: 1 January 1970 at midnight UTC. That count is traditionally stored in a signed 32-bit integer, which can represent numbers ranging from −2³¹ to 2³¹ − 1. But have you wondered when the upper limit will be reached? The answer is 03:14:07 UTC on Tuesday, 19 January 2038. One second later the counter overflows and wraps around to a negative number. That’s known as the Y2038 problem, the spiritual successor of the Millennium Bug. To get a better idea, take a look at this image:
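The overflow date can be checked with a few lines of Python. This is only a sketch: `from_unix` and `wrap_int32` are hypothetical helper names, and the wraparound is simulated with the `struct` module because Python integers do not overflow on their own.

```python
import struct
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
INT32_MAX = 2**31 - 1  # largest value of a signed 32-bit integer


def from_unix(seconds: int) -> datetime:
    """Convert a count of seconds since the Unix Epoch to a UTC datetime."""
    return EPOCH + timedelta(seconds=seconds)


def wrap_int32(value: int) -> int:
    """Reinterpret the low 32 bits of value as a signed 32-bit integer."""
    return struct.unpack("<i", struct.pack("<I", value & 0xFFFFFFFF))[0]


last_valid = from_unix(INT32_MAX)
after_overflow = from_unix(wrap_int32(INT32_MAX + 1))

print(last_valid)      # 2038-01-19 03:14:07+00:00
print(after_overflow)  # 1901-12-13 20:45:52+00:00
```

In other words, a system that still uses a signed 32-bit counter would jump from January 2038 back to December 1901.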

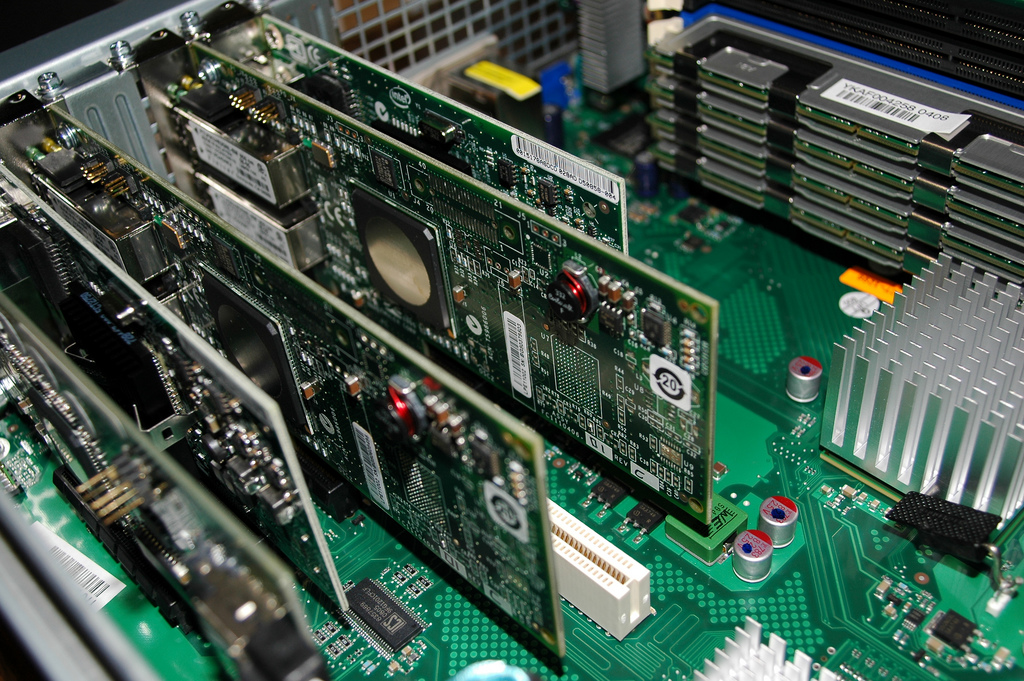

Many solutions have been proposed. One is changing the integer from signed to unsigned, but that would only postpone the problem. Another is switching from a 32-bit integer to a 64-bit integer (if the difference isn’t clear, you can read this article); however, that would break compatibility with existing code, and embedded systems such as routers and switches would still suffer from the problem. Many hope the switch to 64-bit will be complete before the problem actually turns into a bug, and that switch is happening right now. Embedded systems will still be a problem, though, so at the end of the day we have no universal solution.
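To compare the two proposals, the following Python sketch computes how far each one pushes the limit. The variable names are hypothetical, and the average year length of 365.2425 days is an assumption used only to get an order of magnitude.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
SECONDS_PER_YEAR = 365.2425 * 24 * 3600  # average Gregorian year (assumption)

# Unsigned 32-bit counter: the limit moves from 2**31 - 1 to 2**32 - 1 seconds.
unsigned_32_limit = EPOCH + timedelta(seconds=2**32 - 1)

# Signed 64-bit counter: the limit is too far away for datetime to represent,
# so it is expressed here as a number of years after the Epoch.
signed_64_years = (2**63 - 1) / SECONDS_PER_YEAR

print(unsigned_32_limit)           # 2106-02-07 06:28:15+00:00
print(f"{signed_64_years:.3e}")    # roughly 2.9e+11 years
```

An unsigned counter buys about 68 extra years and gives up the ability to represent dates before 1970, while a signed 64-bit counter lasts for roughly 292 billion years.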

Image courtesy of r2hox
